I tracked 5,000 citations that ChatGPT and Claude returned in response to 60 queries over 2.5 months. The two engines cited 452 different domains. The domains they prefer have almost nothing in common with each other, and even less in common with what Google ranks on the same queries.

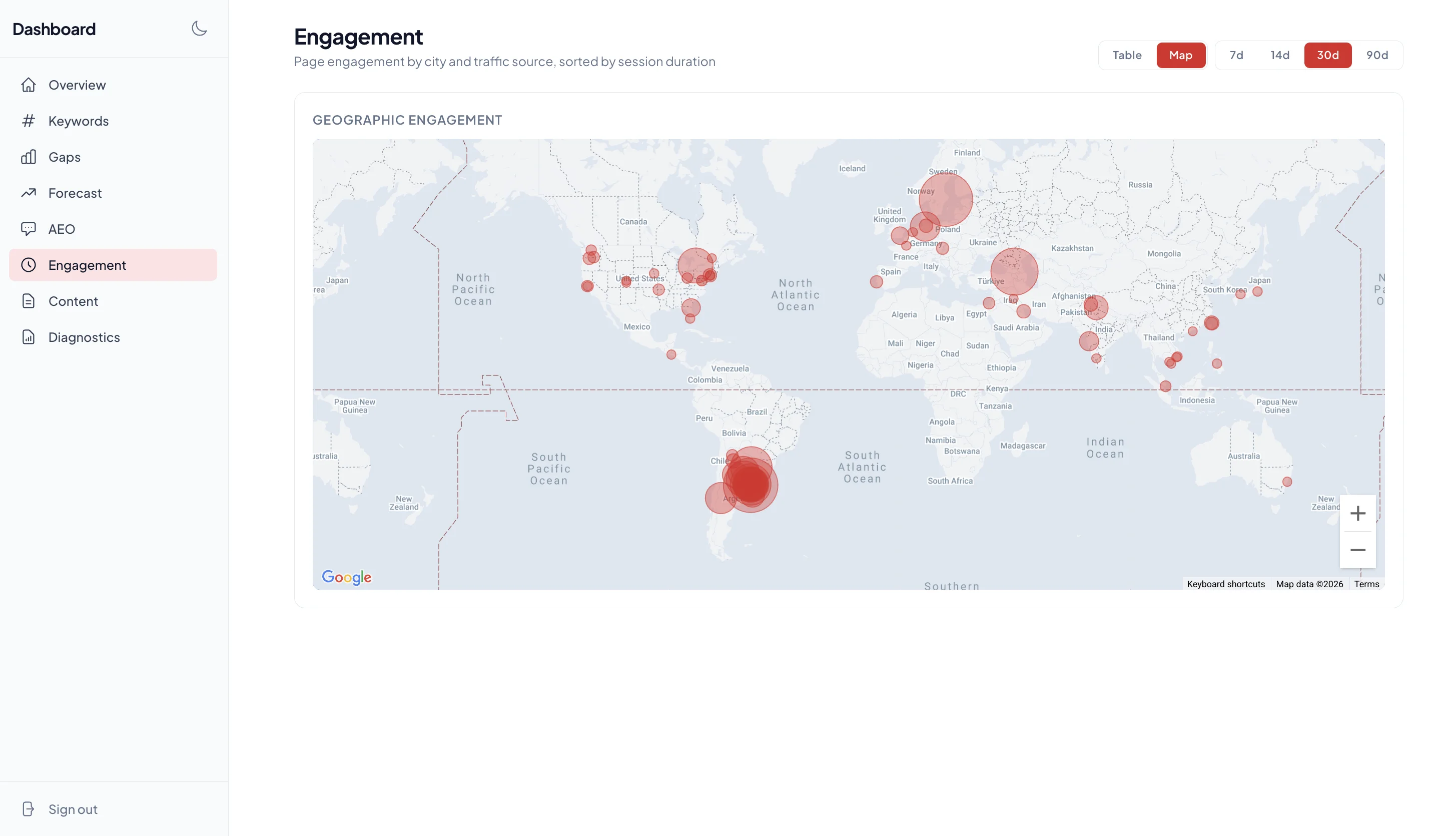

The queries here center on one professional services category (fractional CTOs — what I do for a living, which is why I had the data). The findings about specific domains are particular to this niche. The findings about how the engines behave are likely to generalize to any consulting or B2B services category where buyers ask AI engines for recommendations: what they cite, what they ignore, how their preferences diverge.

If you optimize for Google's SERP, you may not be visible in AI search. If you optimize for one AI engine, you probably aren't cited by the other. AEO (answer engine optimization) is three different surfaces stacked on top of each other: Google, ChatGPT, and Claude.

Five things the data shows

1. AI search is fragmented in a way Google search isn't

The 10 most-cited domains across ChatGPT and Claude account for 24.8% of all citations. The top 25 account for 41.9%, and the top 50 for 59.1%.

| Domain group | Share of all citations |
|---|---|
| Top 10 domains | 24.8% |
| Top 25 domains | 41.9% |
| Top 50 domains | 59.1% |
| All 452 domains | 100% |

For comparison, on the Google SERP for these same queries, Reddit alone accounts for ~18% of organic top-10 appearances, and the top 10 domains take roughly three-quarters of all listings.

Google rewards consolidation. AI search rewards distribution. There is no equivalent of "the Reddit thread that owns the SERP" in AI answers. Every conversation pulls from a different mix.

Why it matters. Anyone trying to rank in AI search is competing against a long tail, not a wall of marketplace giants. Good news if you have specific, citable content.
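For anyone who wants to run the same concentration check on their own citation log, here is a minimal sketch. It assumes the log has already been reduced to a flat list with one domain per citation; the function name `top_n_share` and the toy data are illustrative and not taken from the study.

```python
from collections import Counter


def top_n_share(domains, n):
    """Return the fraction of all citations that go to the n most-cited domains."""
    counts = Counter(domains)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    top = sum(count for _, count in counts.most_common(n))
    return top / total


# Toy data, made up for illustration (not from the study).
sample = ["a.com"] * 5 + ["b.com"] * 3 + ["c.com"] + ["d.com"]

print(top_n_share(sample, 1))  # 0.5
print(top_n_share(sample, 2))  # 0.8
```

A low top-10 share combined with a large number of distinct domains is the long-tail pattern described above.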

2. Reddit dominates AI citations, except in B2B services

Published research from Q1 2026 says Reddit is the single most-cited domain across major AI engines. The 5W AI Platform Citation Source Index attributes roughly 40% of all AI citations across ChatGPT, Gemini, Claude, Perplexity, and Google AI Overviews to Reddit. Tinuiti's tracker reports Reddit's AI citation share grew 73% from October 2025 to January 2026. If you read AEO commentary, the message is consistent: Reddit is winning AI search.

Across the 5,000 citations in this dataset, Reddit appears 0 times.

Both numbers can be true at the same time. The Reddit-dominant numbers come from datasets that span consumer goods, shopping, product comparison, software, finance, and travel: categories where Reddit has high-engagement comparison threads. Azoma's per-query-type breakdown shows Reddit at 15% for shopping queries but only 2-3% for general queries. Subreddits like r/BuyItForLife or r/headphones drive that volume.

Professional services queries don't have those threads. r/Entrepreneur and r/startups have occasional fractional-CTO mentions, but no equivalent of the consumer-product comparison thread that AI engines lean on. The same likely holds for fractional CFOs, fractional CMOs, M&A advisors, executive coaches, and business consultants: any service category where buyers research individuals or small firms rather than products.

| Query category | Reddit citation share | Source |
|---|---|---|
| Shopping / commerce | ~15% | Azoma 2026 |
| General queries | ~2-3% | Azoma 2026 |
| All categories aggregated | ~10-40% | Otterly / 5W / Tinuiti 2026 |
| Fractional CTO / B2B services | 0% | This study |

LinkedIn shows a similar but milder pattern in this dataset: 210 Google SERP appearances on these queries, but only 24 AI citations. HackerNews barely registers anywhere. Both engines in this dataset overwhelmingly cite structured editorial content for B2B service queries: consultant blogs, marketplace explainers, agency websites.

Why it matters. The "Reddit is the most important AEO surface" advice is real, but category-specific. Before investing in Reddit seeding for AI visibility, check whether your category has the kind of comparison-style threads that AI engines pull from. For most B2B services, it doesn't, and the AEO playbook has to be different.

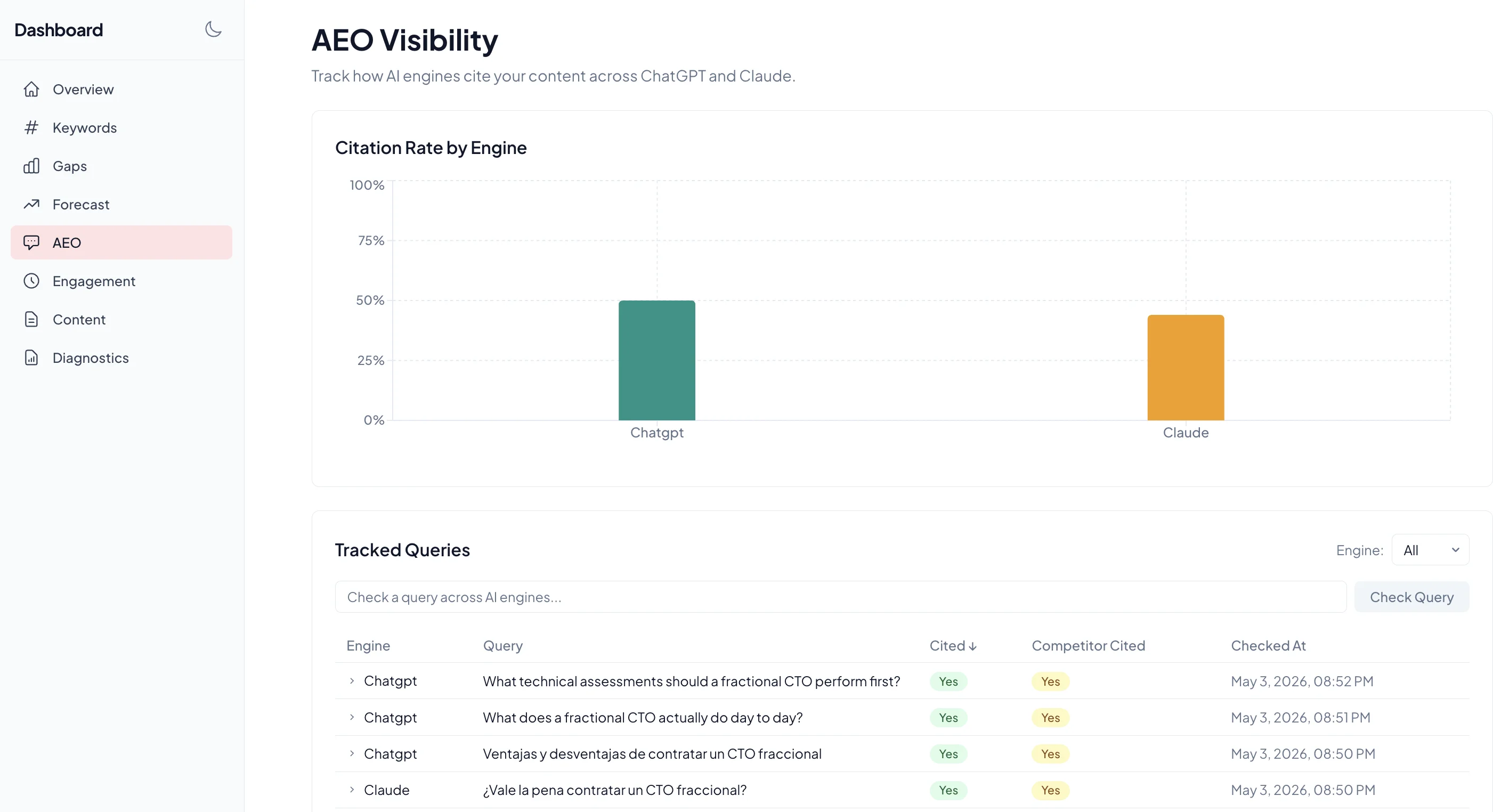

3. ChatGPT and Claude have radically different favorite sources

I expected modest variation between engines. The data shows something stronger.

Claude over-indexes on marketplaces and aggregators:

| Domain | Claude cites | ChatGPT cites |
|---|---|---|
| gofractional.com | 124 | 0 |
| fractionalctos.org | 68 | 4 |
| arc.dev | 58 | 0 |
| www.aikenhouse.com | 50 | 1 |
| cto.academy | 42 | 0 |

ChatGPT over-indexes on individual consultant sites and niche specialists:

| Domain | ChatGPT cites | Claude cites |
|---|---|---|
| ingresstechnology.com | 120 | 0 |
| fractional.quest | 62 | 0 |
| kompella.io | 66 | 11 |
| keytotech.com | 54 | 0 |
| ctofraccional.com | 49 | 0 |

These are sustained patterns across 2.5 months of data and multiple queries, not small-sample noise. Claude weights platforms with editorial or curatorial structure ("here are 10 fractional CTOs"). ChatGPT weights singular authorial voices ("here is one expert's take").

Why it matters. A page that wins Claude citations may not be the same page that wins ChatGPT citations. Different content forms map to different engine preferences. Optimizing for one isn't optimizing for both.
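One way to put a number on the divergence is the Jaccard overlap between the sets of domains each engine cites. The sketch below uses only the ten rows from the two tables above, so it illustrates the calculation rather than measuring the full dataset. The names `ROWS` and `jaccard` are illustrative.

```python
# (domain, claude_cites, chatgpt_cites), copied from the two tables above.
ROWS = [
    ("gofractional.com", 124, 0),
    ("fractionalctos.org", 68, 4),
    ("arc.dev", 58, 0),
    ("www.aikenhouse.com", 50, 1),
    ("cto.academy", 42, 0),
    ("ingresstechnology.com", 0, 120),
    ("fractional.quest", 0, 62),
    ("kompella.io", 11, 66),
    ("keytotech.com", 0, 54),
    ("ctofraccional.com", 0, 49),
]


def jaccard(a, b):
    """Size of the intersection divided by size of the union (0.0 if both are empty)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


claude_domains = {domain for domain, claude, _ in ROWS if claude > 0}
chatgpt_domains = {domain for domain, _, chatgpt in ROWS if chatgpt > 0}

# Only three of the ten domains are cited by both engines at all.
overlap = jaccard(claude_domains, chatgpt_domains)
print(overlap)  # 0.3

# Share of these rows' citations that went to domains cited by only one engine.
exclusive = sum(c + g for _, c, g in ROWS if c == 0 or g == 0)
total = sum(c + g for _, c, g in ROWS)
print(f"{exclusive / total:.0%}")
```

These rows were picked because they are each engine's favorites, so the overlap across all 452 domains may differ.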

4. AI engines cite deep pages, not homepages

| URL type | Share of citations |
|---|---|
| Deep pages (3+ levels deep) | 33.4% |
| Top-level service pages (e.g. /service-x) | 30.8% |
| Blog or article pages | 30.7% |
| Homepages | 5.1% |

Of every 20 AI citations to a domain, only 1 is the homepage. The other 19 point to specific articles, service pages, or pricing/methodology pages.

The takeaway is the opposite of what most consultant websites are designed for. A homepage with a hero, services overview, and contact form is invisible to AI search. The pages that get cited are the ones that answer a specific question with structure: pricing breakdowns, role definitions, comparison frameworks, decision trees.

Why it matters. If your strongest content lives on the homepage and your "blog" is a thin afterthought, AI search will skip you. The deep content is the AI-search content.
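To audit your own cited URLs against these buckets, a rough classifier along the following lines may help. The bucketing rules are assumptions rather than the exact rules behind the table: the `BLOG_HINTS` path segments are guesses, and two-level paths are left as "other" because the table does not say where they fall.

```python
from urllib.parse import urlparse

# Assumption: path segments that usually signal blog or article content.
BLOG_HINTS = {"blog", "article", "articles", "insights", "news", "posts"}


def classify_url(url):
    """Bucket a cited URL by path shape: homepage, blog, deep, top-level, or other."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    if not segments:
        return "homepage"
    if any(s.lower() in BLOG_HINTS for s in segments):
        return "blog"
    if len(segments) >= 3:
        return "deep"
    if len(segments) == 1:
        return "top-level"
    return "other"  # two-level paths: not covered by the table's definitions


print(classify_url("https://example.com/"))                     # homepage
print(classify_url("https://example.com/pricing"))              # top-level
print(classify_url("https://example.com/blog/what-is-a-cto"))   # blog
print(classify_url("https://example.com/services/cto/pricing")) # deep
```

Query strings are ignored, so a homepage URL carrying a UTM parameter still counts as a homepage.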

5. ChatGPT tags every citation. Claude is invisible by design.

100% of ChatGPT citations in this dataset carried a utm_source parameter on the outbound URL (2,307 of 2,307). Zero Claude citations did.

The exact UTM value depends on which OpenAI product is sending the traffic. Consumer-facing ChatGPT (what most people use) tags outbound links with utm_source=chatgpt.com, a behavior rolled out broadly in June 2025. The OpenAI API with web search (the path I used for this study) tags with utm_source=openai instead. Different product, different value, same pattern: every ChatGPT-cited URL is attributable.

Claude does the opposite. It doesn't append UTMs, and per independent analytics research it also strips the HTTP Referer header on outbound clicks. Traffic from Claude conversations lands in your analytics as "Direct" with no source attribution at all. There's a pending feature request asking Anthropic to add UTM tags. Until that ships, Claude-driven traffic is invisible to standard analytics setups.

What follows from this:

- ChatGPT traffic is attributable. Filter your analytics by `utm_source=chatgpt.com` (or `utm_source=openai` for API-driven traffic) for a clean view of which conversations are sending visitors.

- Claude traffic looks like Direct traffic. No UTM, no Referer header. If you've never seen Claude in your analytics, it isn't because no one's using it. Claude doesn't announce itself, and the Referer is stripped before the visit reaches you.

- The "AI traffic" numbers most marketers quote underestimate the total by an unknown but real amount, because they only count the ChatGPT portion that's tagged.

Why it matters. If you're sizing AI search as a channel based on what shows up in GA4, you're sizing only part of the picture, possibly around half: ChatGPT supplied 2,307 of the roughly 5,000 citations in this dataset. To get closer to the truth, segment your "Direct" traffic by landing-page URL: traffic landing on a deep article or pricing page (not the homepage), with no Referer and no UTM, is your best proxy for Claude and other untagged engines.
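The segmentation described above can be sketched as a small classifier over landing URLs and referrers. This is a heuristic under stated assumptions, not an analytics integration: the function name and bucket labels are made up for illustration, and the only `utm_source` values it recognizes are the two named in this section.

```python
from urllib.parse import parse_qs, urlparse

# The two utm_source values described above for OpenAI products.
CHATGPT_SOURCES = {"chatgpt.com", "openai"}


def classify_visit(landing_url, referrer=""):
    """Bucket a visit by its UTM tag, referrer, and landing-page depth."""
    parsed = urlparse(landing_url)
    utm = parse_qs(parsed.query).get("utm_source", [""])[0].lower()
    if utm in CHATGPT_SOURCES:
        return "chatgpt"
    if utm or referrer:
        return "other-attributed"
    # No UTM and no referrer: this is what analytics tools report as Direct.
    if parsed.path.strip("/") == "":
        return "direct-homepage"
    return "possible-untagged-ai"


print(classify_visit("https://example.com/pricing?utm_source=chatgpt.com"))  # chatgpt
print(classify_visit("https://example.com/pricing"))  # possible-untagged-ai
print(classify_visit("https://example.com/"))         # direct-homepage
```

The "possible-untagged-ai" bucket is only a proxy. Bookmarks, email clients, and messaging apps also produce deep-page visits with no referrer, so treat it as an upper bound on untagged AI traffic.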

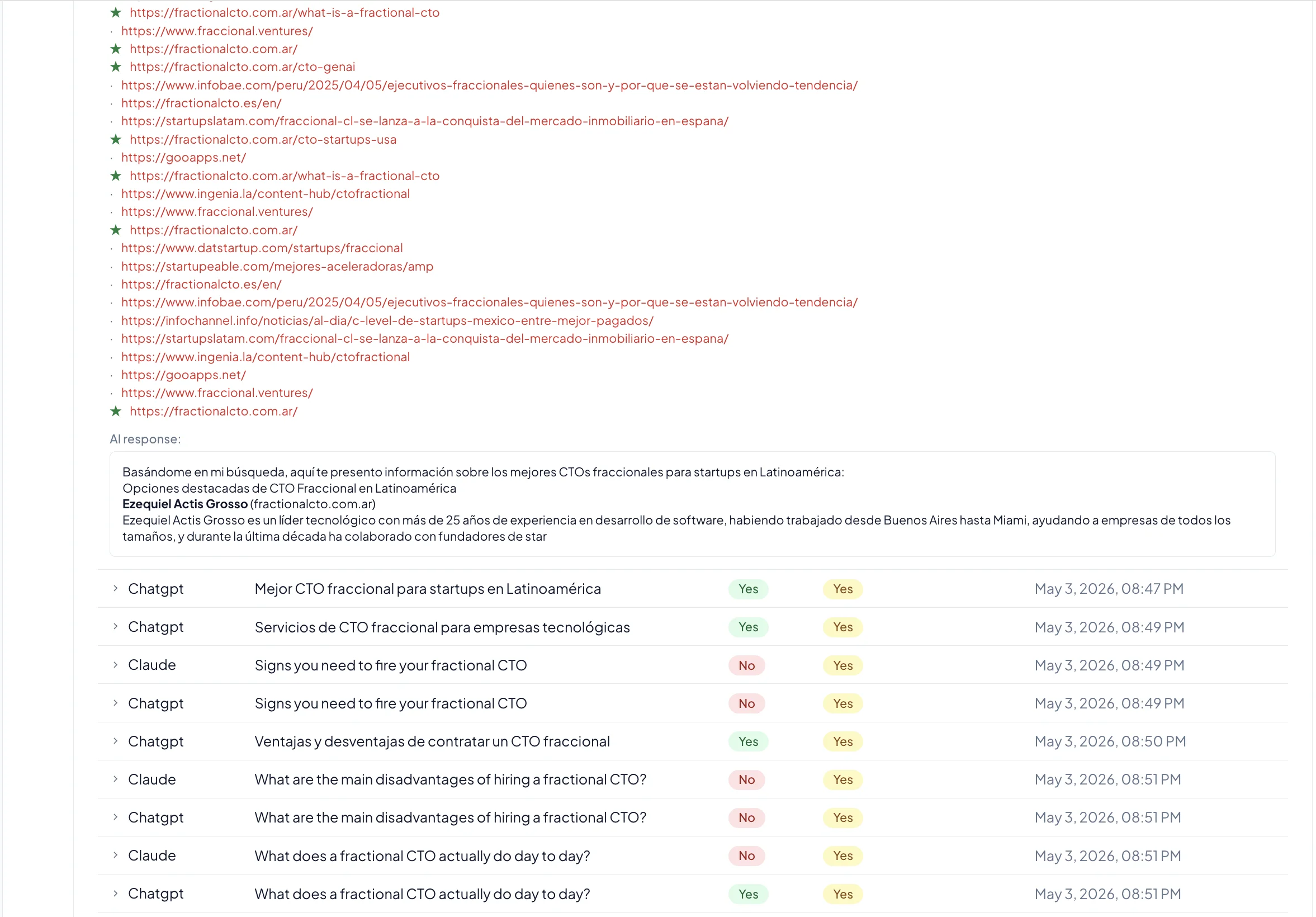

A note on listicle queries

Of the 60 queries I tracked, the most-cited individual queries are the "best of" listicle prompts:

| Query | Total citations (2.5 months) |
|---|---|
| Who are the best fractional CTOs? | 484 |
| Fractional CTO services for startups | 469 |
| CTO as a service for small companies | 428 |
| How to hire a fractional CTO for a startup? | 425 |
| Best fractional CTO in Latin America | 420 |
| Fractional CTO cost and pricing | 414 |

These queries return long answers with many citations each, so they look attractive as targets. But the citation distribution within each query is highly fragmented.

For example, "Best fractional CTO for fintech startups?" was checked 24 times. Across those 24 checks, the engines cited 27 different domains. The most-cited single source got 16 citations, and it was google.com itself (the engine quoting Google search results back). The next-highest source got 11. After that, citation counts collapse to single digits.

There is no anchor source for "best fractional CTO" queries. The engines don't know who the best ones are, so they sample broadly. Listicle queries are easier to break into than they look. But getting cited once doesn't mean getting cited repeatedly.

The opposite pattern shows up on geographic queries with smaller, more defined ecosystems. For "Best fractional CTO in Latin America," three sources together account for ~30% of all citations:

| Top citation | Citations | Share |
|---|---|---|
| pangea.ai | 47 | 11% |
| builtin.com | 44 | 10% |
| netmidas.com | 40 | 10% |

LATAM has clear top-3 winners. Fintech doesn't. The lesson: in defined niches with fewer credible sources, citations concentrate on a handful of domains; in open-ended categories with thousands of candidates, they scatter.
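The LATAM shares can be reproduced from the counts in the table. This short check assumes the denominator is the 420 total citations listed for that query in the listicle table, which is consistent with the rounded shares shown.

```python
# Total citations for "Best fractional CTO in Latin America" (from the listicle table).
TOTAL = 420

# Citation counts for the three leading sources (from the table above).
top_sources = {"pangea.ai": 47, "builtin.com": 44, "netmidas.com": 40}

shares = {domain: count / TOTAL for domain, count in top_sources.items()}
combined = sum(top_sources.values()) / TOTAL

for domain, share in shares.items():
    print(f"{domain}: {share:.1%}")
print(f"top 3 combined: {combined:.1%}")
```

The three sources together come to 131 of 420 citations, or about 31%.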

Methodology

- Time range: February 27 to May 3, 2026 (66 days).

- Engines: ChatGPT and Claude, queried via API with each prompt as a fresh conversation, no system prompt.

- Queries: 60 distinct prompts in English (39) and Spanish (21), covering definition queries, pricing queries, hire/process queries, best-of/comparison queries, and conversational long-tail.

- Cadence: Each query checked roughly weekly per engine. Top-volume English queries were checked up to 24 times; newer or lower-priority queries 5–7 times.

- Citation extraction: URLs parsed from each engine's structured response. Both engines provide source URLs alongside their answers.

Caveats:

- 60 queries is a curated set, not the full universe of fractional-CTO questions. Results bias toward the kinds of questions a US/LATAM startup founder might ask.

- ChatGPT was checked across 60 queries; Claude across 41. Per-query citation density is comparable.

- Citation behavior changes over time. The Apr 13–27 weeks show different patterns than Feb–Mar, possibly due to model updates I don't have visibility into.

- Both engines occasionally cite themselves, search engines, or aggregator metadata pages. I did not filter these out.

Anonymized citation dataset available as CSV (coming soon).

What this means if you're trying to be cited

- Don't over-optimize for one engine. The Claude playbook and the ChatGPT playbook are different enough that aiming at one will likely miss the other. Pick the engine whose audience matters more to you, and accept the trade-off.

- Build deep pages, not homepages. The single-homepage / many-shallow-pages pattern most consultant sites use is invisible to AI search. Pricing pages, methodology pages, role-definition pages, comparison tables: that's what gets cited.

- Pick a defined niche. The open-ended fintech best-of query spread its citations across 27 domains with no anchor source. The Latin America query had three clear leaders. A niche with fewer credible sources is the easier one to win.

- Tag your own analytics. Filter for `utm_source=chatgpt.com` (consumer ChatGPT) and `utm_source=openai` (API-driven). Segment "Direct" traffic by landings on deep article and pricing pages, which is your best proxy for Claude and other untagged engines. Treat them as separate channels.

- Check whether Reddit works for your category before chasing it. Published research shows Reddit dominates AI citations in consumer and shopping categories, but B2B services like fractional CTO get zero Reddit citations in this dataset. Reddit's value depends on whether your category has active comparison-style threads.

Open questions

A few things this data doesn't answer that I'd like to track in the next quarter:

- How quickly do new pages get indexed by AI engines? I launched a pricing page on April 30 and have not yet seen it cited.

- Does `sitemap.xml` affect AI citation, or do these engines crawl independently?

- Do `llms.txt` files influence citations yet? Adoption is too small in this dataset to tell.

- Is there citation persistence? When a domain wins a slot, does it keep winning, or does each run sample fresh? Early evidence points both ways.

I'll publish an updated version once there are ~6 months of data and answers to at least the first two.

About this report

This data comes from an SEO/AEO monitoring agent I built to track my own visibility across Google and AI engines. I'm publishing it because no one else seems to be sharing this kind of cross-engine citation data, and the gap between common AEO advice and what the data shows is wider than I expected.

Questions, corrections, or requests for additional cuts: connect on LinkedIn.